September 16, 2023


Weekend Experiments

Weekends are my time to explore. My homelab runs a full generative AI stack - models, workflows, UIs - and I use that freedom to experiment with things that commercial services either do not offer or charge a premium for. This particular weekend, I dove into two areas: image generation with ComfyUI and custom AI chat characters powered entirely by local models.

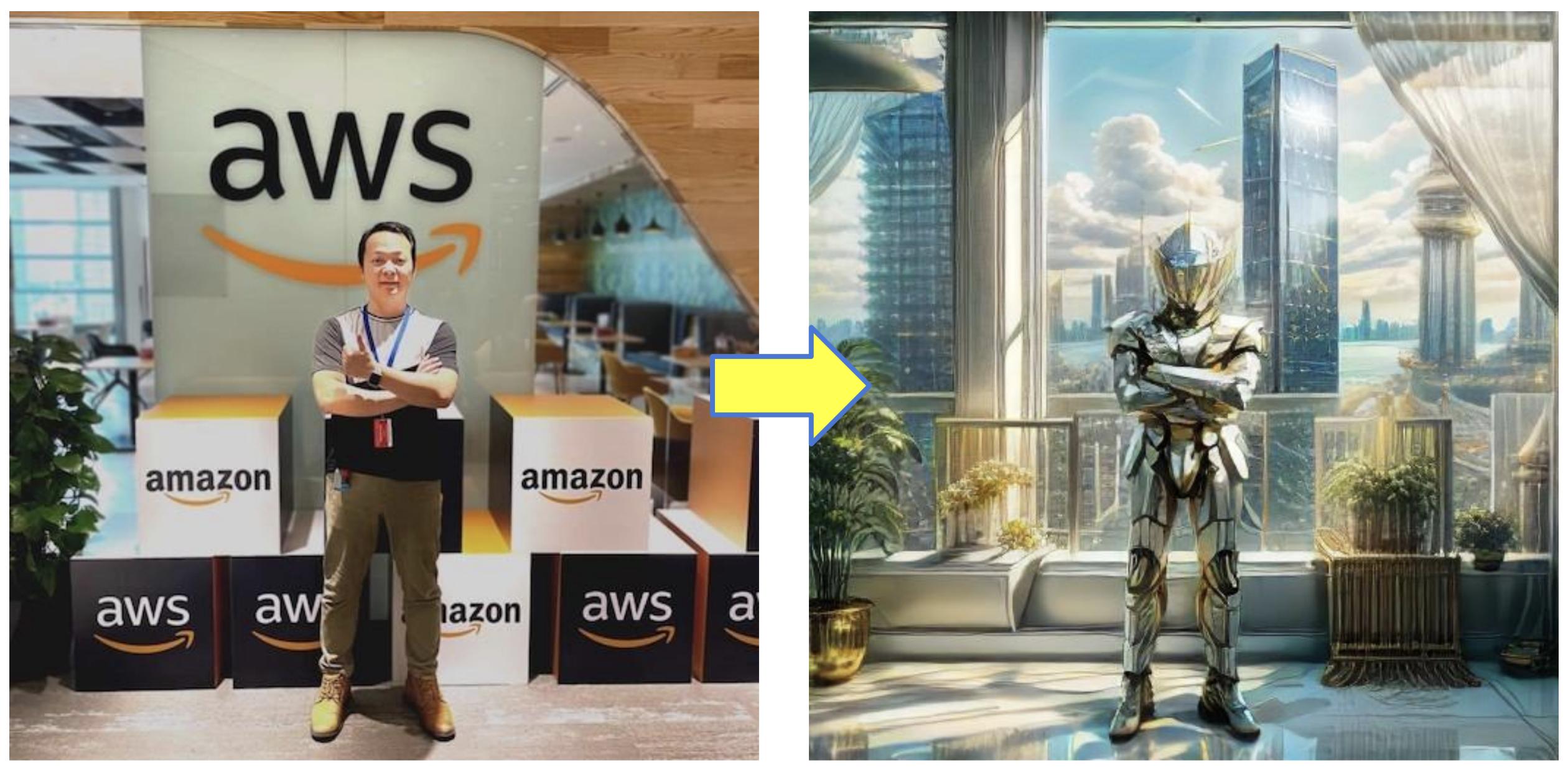

Transforming Images with Img2Img

The first experiment used a technique called Img2Img (Image-to-Image) generation. You start with a base image and let the AI generate variations based on it - same composition, completely different artistic style.

The results were genuinely impressive. From a single base image, the model produced unique variations, each with its own artistic interpretation. What would take a human artist hours to conceptualize, the AI iterated through in minutes.
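Mechanically, Img2Img encodes the base image into the model's latent space, adds a controlled amount of noise, and lets the sampler denoise from that point instead of from pure noise. A strength (ComfyUI calls it `denoise`) near 1.0 leaves little of the original, while lower values keep more of its structure. The sketch below illustrates the idea on plain Python lists. It assumes the step-skipping rule used by Hugging Face diffusers' img2img pipeline and a DDPM-style noising formula. The function names are hypothetical, and ComfyUI's own schedule arithmetic differs in detail.

```python
import math
import random


def img2img_schedule(num_steps: int, strength: float) -> list[int]:
    """Return the sampler step indices that an Img2Img run actually executes.

    Assumption: diffusers-style rule. strength=1.0 runs every step (base image
    fully drowned in noise); lower values skip the earliest, noisiest steps,
    so more of the base image survives.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be in [0, 1]")
    steps_to_run = min(int(num_steps * strength), num_steps)
    start = num_steps - steps_to_run
    return list(range(start, num_steps))


def noise_latent(latent: list[float], alpha_bar: float, rng: random.Random) -> list[float]:
    """Forward-diffuse a latent: x_t = sqrt(alpha_bar) * x_0 + sqrt(1 - alpha_bar) * eps."""
    signal = math.sqrt(alpha_bar)
    noise = math.sqrt(1.0 - alpha_bar)
    return [signal * x + noise * rng.gauss(0.0, 1.0) for x in latent]


# With 20 steps and strength 0.5, only the last 10 (least noisy) steps run.
print(img2img_schedule(20, 0.5))
```

This is why a moderate strength keeps the composition recognisable: the early steps, which decide the overall layout, are skipped, and the sampler only reworks texture and style.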

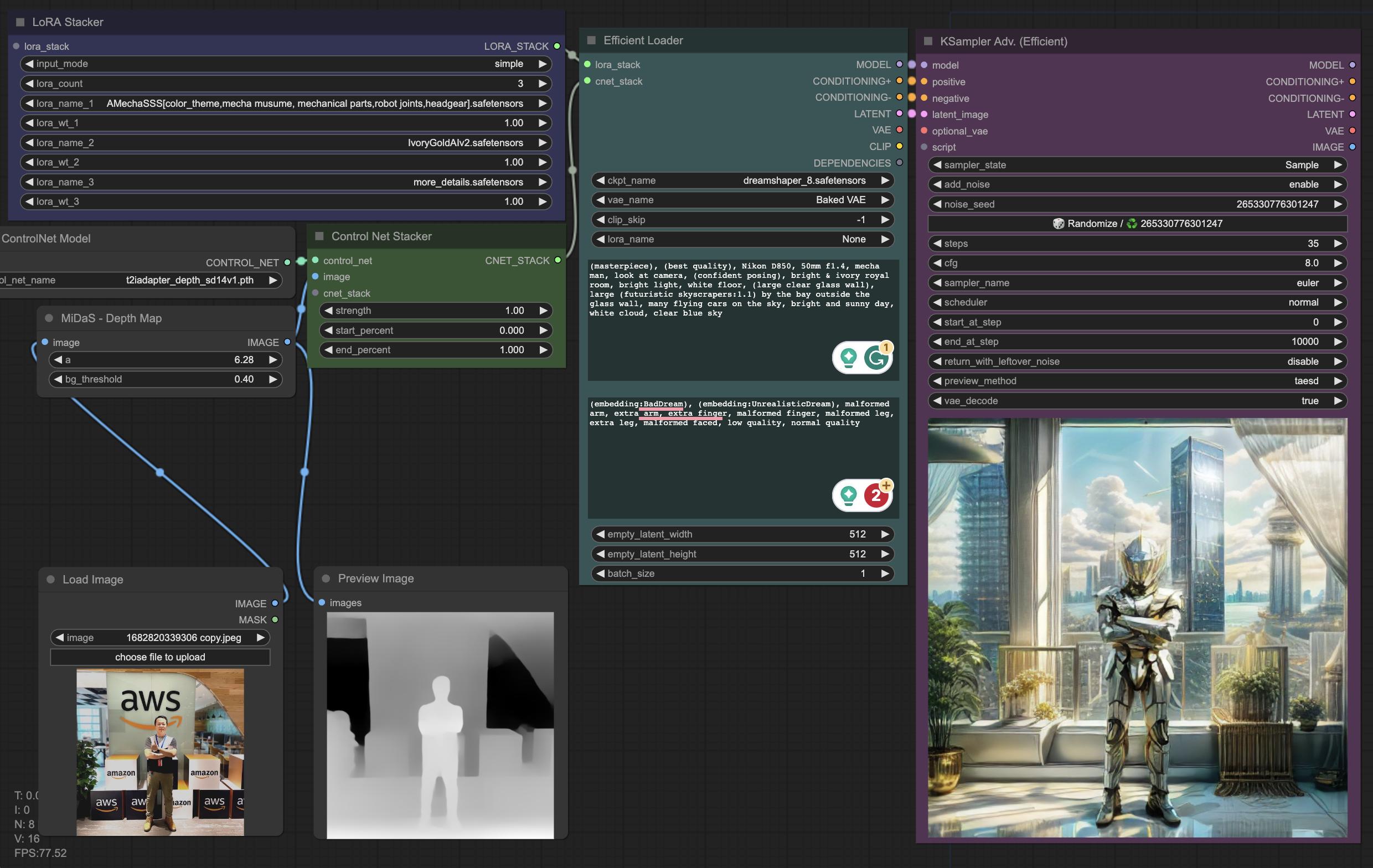

The ComfyUI Workflow

The key to repeatable results is a well-structured workflow. I used ComfyUI - a node-based UI for Stable Diffusion that gives you granular control over every step of the generation pipeline.

Here is the pipeline I settled on:

| Step | What It Does | Tool/Model |
|---|---|---|
| 1. Load base image | Starting point for the transformation | Any image |
| 2. Pre-processing | Generate a depth map to guide the AI’s composition | Depth Map node |
| 3. Checkpoint selection | Load a pre-trained model as the generation foundation | DreamShaper |
| 4. Prompt engineering | Positive and negative prompts to steer style and content | Text nodes |
| 5. Refinement | Enhance quality and refine details on top of the checkpoint | LoRAs (Low-Rank Adaptation) |

The depth map step is critical: it tells the model where things should be in the frame without constraining what they look like (in ComfyUI, a depth map usually reaches the sampler through a depth ControlNet). This gives you creative freedom while maintaining compositional coherence.
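The table maps fairly directly onto a ComfyUI graph. The sketch below expresses a comparable pipeline in ComfyUI's API (JSON) format as a Python dict and queues it on a local server. It assumes the default address `http://127.0.0.1:8188`, core node types (`CheckpointLoaderSimple`, `LoraLoader`, `CLIPTextEncode`, `ControlNetLoader`, `ControlNetApply`, `VAEEncode`, `KSampler`), a depth map that has already been produced as an image (the depth-estimation node itself is left out), and hypothetical model filenames. Node names and inputs can shift between ComfyUI versions. In a graph like this, the LoRA is wired in between the checkpoint and the sampler rather than run as a separate pass afterwards.

```python
import json
import urllib.request

COMFY_URL = "http://127.0.0.1:8188/prompt"  # assumption: default local ComfyUI server


def build_workflow(base_image: str, depth_image: str, positive: str, negative: str,
                   denoise: float = 0.6, seed: int = 42) -> dict:
    """Return an API-format graph: node id -> {class_type, inputs}.

    Links between nodes are written as [source_node_id, output_index].
    Image names refer to files in ComfyUI's input folder.
    """
    return {
        "1": {"class_type": "CheckpointLoaderSimple",  # outputs: MODEL, CLIP, VAE
              "inputs": {"ckpt_name": "dreamshaper_8.safetensors"}},  # hypothetical filename
        "2": {"class_type": "LoraLoader",  # patches model and CLIP before sampling
              "inputs": {"model": ["1", 0], "clip": ["1", 1],
                         "lora_name": "detail_lora.safetensors",  # hypothetical filename
                         "strength_model": 0.7, "strength_clip": 0.7}},
        "3": {"class_type": "CLIPTextEncode", "inputs": {"text": positive, "clip": ["2", 1]}},
        "4": {"class_type": "CLIPTextEncode", "inputs": {"text": negative, "clip": ["2", 1]}},
        "5": {"class_type": "LoadImage", "inputs": {"image": base_image}},
        "6": {"class_type": "LoadImage", "inputs": {"image": depth_image}},  # precomputed depth map
        "7": {"class_type": "ControlNetLoader",
              "inputs": {"control_net_name": "control_depth.safetensors"}},  # hypothetical filename
        "8": {"class_type": "ControlNetApply",  # depth map constrains layout via positive prompt
              "inputs": {"conditioning": ["3", 0], "control_net": ["7", 0],
                         "image": ["6", 0], "strength": 1.0}},
        "9": {"class_type": "VAEEncode", "inputs": {"pixels": ["5", 0], "vae": ["1", 2]}},
        "10": {"class_type": "KSampler",  # denoise < 1.0 is what makes this Img2Img
               "inputs": {"model": ["2", 0], "positive": ["8", 0], "negative": ["4", 0],
                          "latent_image": ["9", 0], "seed": seed, "steps": 25, "cfg": 7.0,
                          "sampler_name": "euler", "scheduler": "normal", "denoise": denoise}},
        "11": {"class_type": "VAEDecode", "inputs": {"samples": ["10", 0], "vae": ["1", 2]}},
        "12": {"class_type": "SaveImage",
               "inputs": {"images": ["11", 0], "filename_prefix": "img2img"}},
    }


def queue_workflow(workflow: dict, url: str = COMFY_URL) -> dict:
    """POST the graph to a running ComfyUI server and return its JSON reply."""
    body = json.dumps({"prompt": workflow}).encode("utf-8")
    req = urllib.request.Request(url, data=body, headers={"Content-Type": "application/json"})
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())
```

Because the whole pipeline is data, rerunning it with a new seed or a different `denoise` value is a one-line change, which is what makes node-based workflows reproducible.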

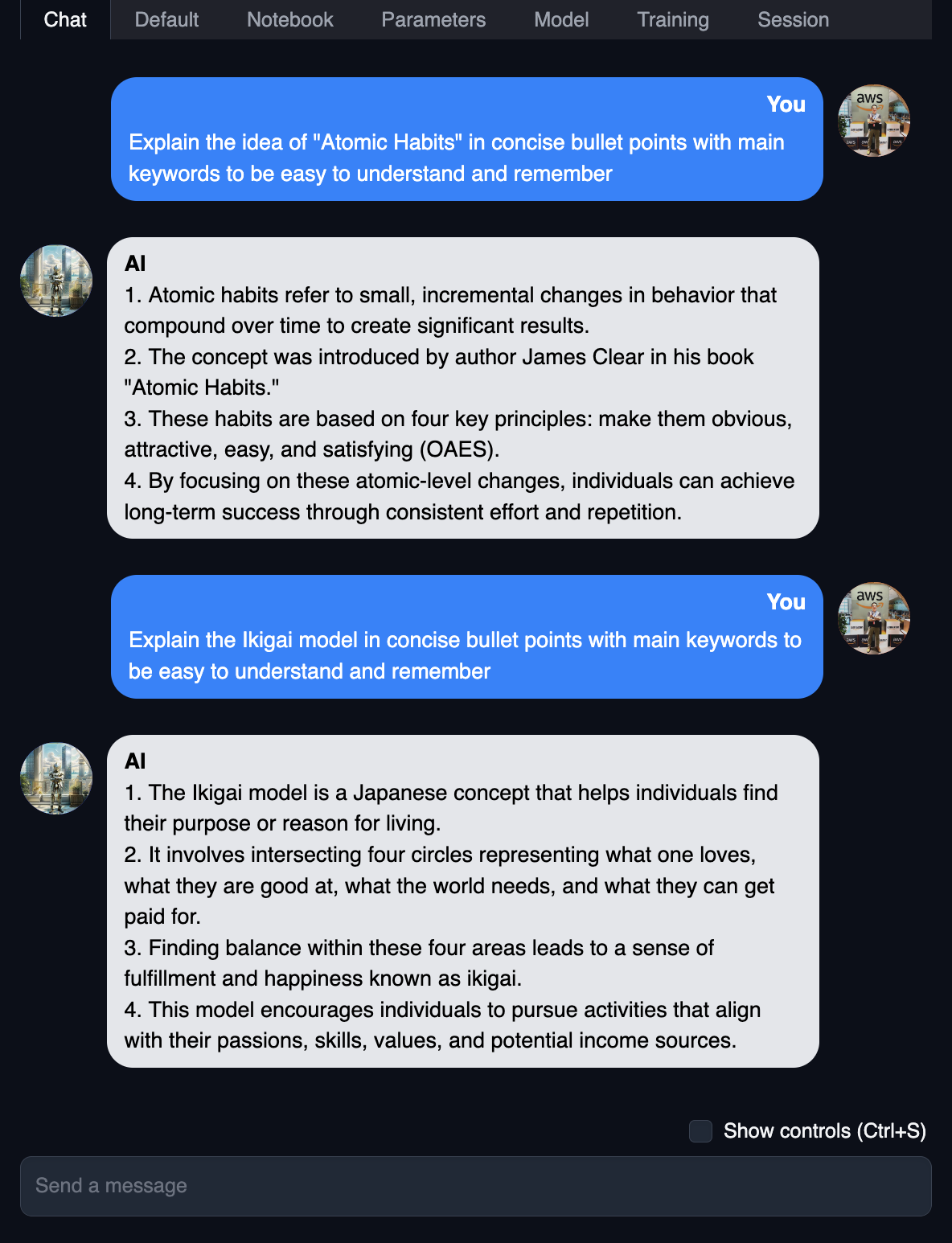

Custom Chat Characters

The second experiment combined the generated image with a local chat model to create a custom AI character, using the newly generated artwork as the character’s avatar in my self-hosted chat interface.

The chat was powered by Marcoroni-13B, quantized into GGUF format by Tom Jobbins. Based on Llama 2, it was one of the highest-scoring models on the Open LLM Leaderboard at the time.

Running the chat model locally meant zero API costs, zero data leaving my network, and complete freedom to customize the character’s personality and behaviour. Pairing a custom-generated avatar with a locally hosted language model made for a surprisingly engaging and immersive experience.
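With a local model, a character is mostly a prompt: a persona, a greeting, and the running conversation, rendered into whatever template the model was fine-tuned on. The sketch below assumes the Alpaca-style `### Instruction:` / `### Response:` template listed on the Marcoroni-13B GGUF model card (check the card for the file you actually download). The `Character` card format and the `build_prompt` helper are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Character:
    """Hypothetical minimal character card: who the bot is and how it opens."""
    name: str
    persona: str
    greeting: str
    history: list[tuple[str, str]] = field(default_factory=list)  # (speaker, text) pairs


def build_prompt(char: Character, user_message: str) -> str:
    """Render persona and chat history into an Alpaca-style prompt (assumed template)."""
    lines = [
        f"You are {char.name}. {char.persona}",
        f"Stay in character and reply as {char.name}.",
        "",
        f"{char.name}: {char.greeting}",
    ]
    lines += [f"{speaker}: {text}" for speaker, text in char.history]
    lines.append(f"User: {user_message}")
    context = "\n".join(lines)
    return (
        "Below is an instruction that describes a task. "
        "Write a response that appropriately completes the request.\n\n"
        f"### Instruction:\n{context}\n\n### Response:\n{char.name}:"
    )


aria = Character("Aria", "A cheerful cartographer from a floating city.", "Hello, traveler!")
print(build_prompt(aria, "Where are we?"))
```

The resulting string can be passed to a GGUF runtime such as llama.cpp. Ending the prompt with the character's name nudges the model to answer in voice, and using `User:` as a stop sequence keeps it from writing the user's side of the conversation.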

What I Learned

- Self-hosted GenAI is genuinely fun. The freedom to experiment without API costs or data privacy concerns changes what you are willing to try.

- ComfyUI’s node-based approach is powerful. Once you build a workflow, it is reproducible and tweakable. Far better than prompt-and-pray.

- Local models are good enough for creative work. You do not need GPT-4 or Claude for character chat or artistic generation. A well-quantized 13B model on consumer hardware delivers solid results.

- The creative loop is tight. Generate an image, use it as a character avatar, iterate on the personality - all within minutes, all on your own hardware.
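Whether a 13B model fits on consumer hardware comes down to simple arithmetic: weight memory is roughly parameters × bits per weight ÷ 8. The sketch below assumes a nominal 13 billion parameters and the block layouts of llama.cpp's `Q8_0` and `Q4_0` formats (32 weights plus one 16-bit scale per block). It counts weights only, so the KV cache and runtime overhead come on top.

```python
def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in decimal gigabytes (weights only)."""
    return n_params * bits_per_weight / 8 / 1e9


BITS_PER_WEIGHT = {
    "F16": 16.0,   # unquantized half precision
    "Q8_0": 8.5,   # 32 x 8-bit weights + one fp16 scale = 34 bytes per 32 weights
    "Q4_0": 4.5,   # 32 x 4-bit weights + one fp16 scale = 18 bytes per 32 weights
}

for fmt, bpw in BITS_PER_WEIGHT.items():
    print(f"{fmt:5s} ~{weights_gb(13e9, bpw):.1f} GB")
# F16   ~26.0 GB
# Q8_0  ~13.8 GB
# Q4_0  ~7.3 GB
```

Going from about 26 GB at F16 to about 7.3 GB at 4-bit is what moves a 13B model from workstation territory onto a typical consumer GPU or into ordinary system RAM.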

If you have a homelab or even a decent GPU, I highly recommend spending a weekend exploring what self-hosted GenAI can do. The barrier to entry has never been lower, and the creative possibilities are genuinely exciting.
