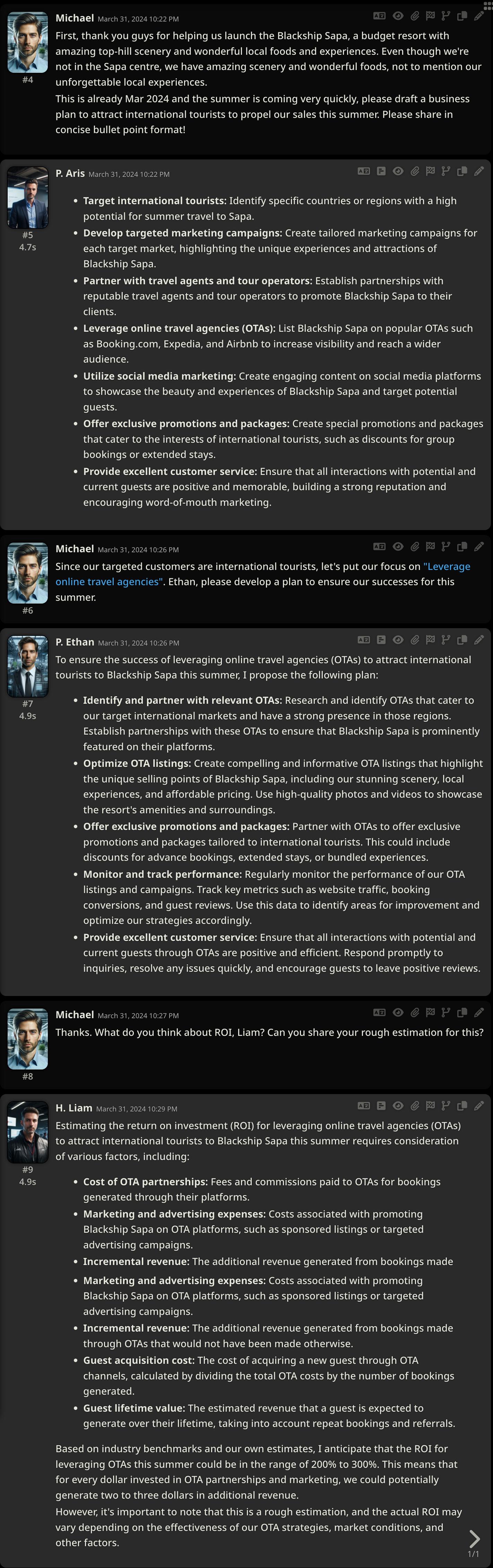

March 31, 2024


I spent months using ChatGPT and local LLMs as standalone assistants. They were impressive, but something kept bothering me. I would ask one model for a business strategy and get a decent plan - but no market analysis, no financial projection, no execution roadmap. Then I would re-prompt the same model for each of those missing pieces, and each response would ignore what came before. It felt like asking one person to be a marketer, strategist, and financial analyst all at once. The quality ceiling was obvious.

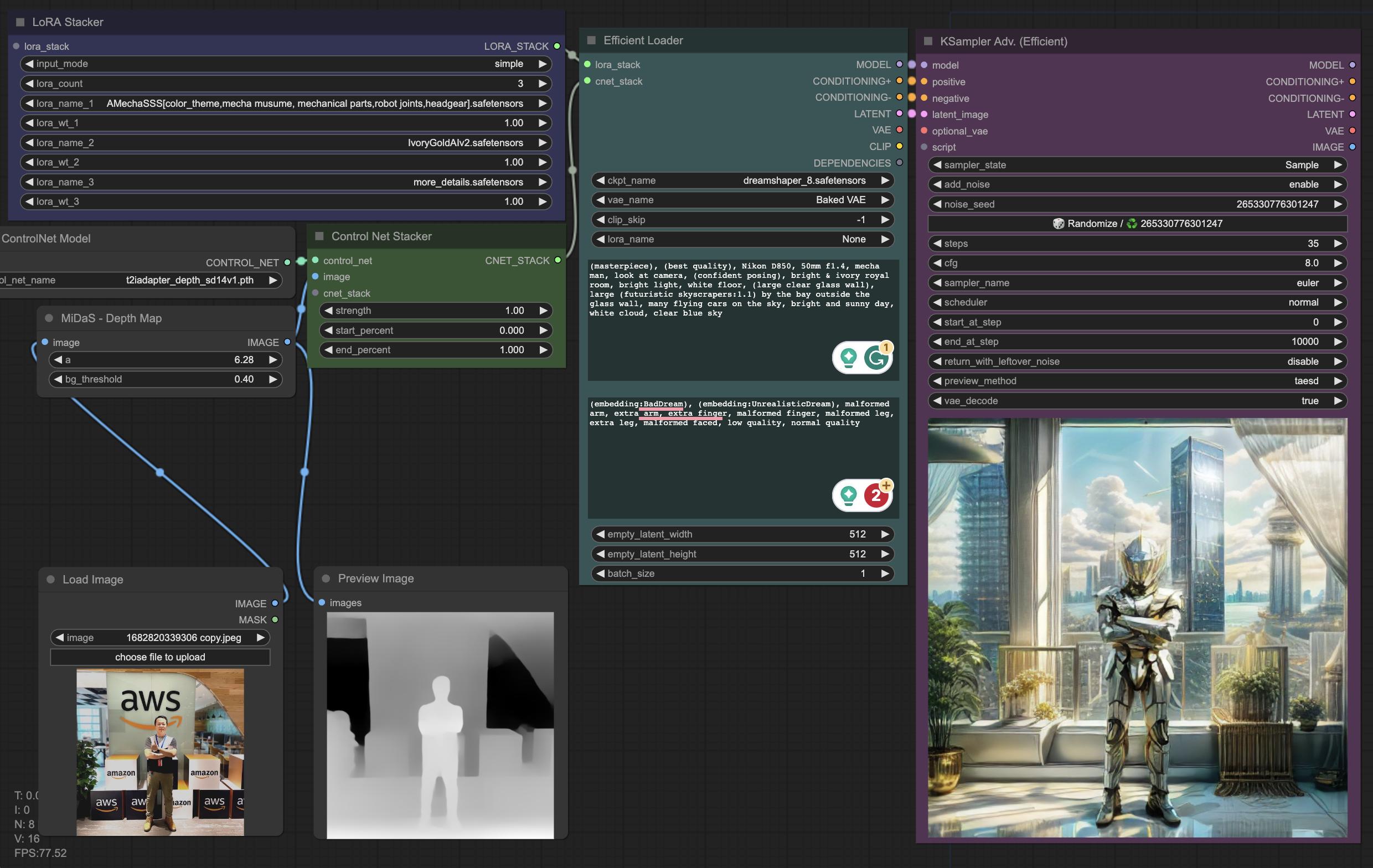

So I built something different. On my homelab, I set up a team of specialist AI agents, each with a distinct role, collaborating through a shared conversation. The shift from “one smart assistant” to “a team of focused specialists” fundamentally changed how I think about AI capability.

flowchart TD
U[Human Orchestrator] --> A[P. Aris - Marketing]
U --> B[P. Ethan - Strategy]
U --> C[H. Liam - Finance]
A -->|contributes| S[Synthesized Plan]
B -->|contributes| S
C -->|contributes| S
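
The shape of that diagram can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the actual homelab setup: `run_team`, the `stub` helper, and the idea of modeling a specialist as a function over the shared transcript are all hypothetical, and a real version would replace each stub with a model call.

```python
from typing import Callable

# Assumption: a specialist is modeled as a function from the shared
# transcript to a reply. A real setup would wrap an LLM call here.
Specialist = Callable[[list[str]], str]


def run_team(request: str, team: dict[str, Specialist]) -> list[str]:
    """Let each specialist contribute once to a shared conversation."""
    transcript = [f"Human Orchestrator: {request}"]
    for name, specialist in team.items():
        # Each agent receives a copy of everything said so far,
        # so later agents can build on earlier contributions.
        reply = specialist(list(transcript))
        transcript.append(f"{name}: {reply}")
    return transcript


def stub(role: str) -> Specialist:
    # Hypothetical stand-in for a model: reports how many turns it read.
    return lambda transcript: f"{role} input after reading {len(transcript)} earlier turn(s)"


team = {
    "P. Aris - Marketing": stub("marketing"),
    "P. Ethan - Strategy": stub("strategy"),
    "H. Liam - Finance": stub("finance"),
}
transcript = run_team("Draft a summer plan", team)
print("\n".join(transcript))
```

The human orchestrator still does the synthesis: the transcript is the raw material, not the finished plan.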

Why Does a Single AI Hit a Ceiling?

Most AI assistants today function as standalone, generalized models - one brain trying to handle everything from marketing strategy to financial analysis to code review. Their breadth is impressive, but this one-size-fits-all approach limits depth. Microsoft's research on multi-agent frameworks such as AutoGen reports that specialized agents working together can outperform single-agent approaches on complex tasks.

The fundamental problem is context. A single model asked to “create a marketing plan” will produce a surface-level response because it is trying to research markets, design campaigns, estimate budgets, and plan execution in one pass. It cannot go deep on any dimension without losing the others. Separating these concerns into distinct agents - each with its own context and expertise boundaries - mirrors how effective human teams actually operate.

What Does Specialization Look Like in Practice?

To illustrate, consider a real scenario. I asked my agent team: “Create a plan to leverage online travel agencies to attract international tourists to Blackship Sapa this summer.”

Here is what happened:

| Agent | Role | What It Actually Did |
|---|---|---|
| P. Aris (Marketing) | Identifies target markets | Listed specific countries, proposed tailored campaigns per market, recommended OTA platforms like Booking.com and Expedia |
| P. Ethan (Strategy) | Designs the execution plan | Proposed OTA partnership strategy, defined OTA listing optimization, outlined promotion packages |
| H. Liam (Finance) | Estimates the ROI | Calculated OTA commission costs, customer acquisition costs, projected 200-300% ROI range |

Each agent brought a different lens. The marketing agent did not try to calculate ROI; the finance agent did not try to design campaigns. Each contributed its piece, and I - the human orchestrator - guided the conversation and synthesized their outputs. The result was a multi-layered business plan that no single AI could produce in one pass.

What makes this work is the scope boundaries. When I defined each agent, I was explicit about what it should and should not do. The marketer identifies opportunities; the strategist designs execution; the analyst validates financially. These distinctions prevent the overlap that turns multi-agent systems into expensive chaos.
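
One way to make those boundaries concrete is to write them down as data and generate each system prompt from them. The sketch below is an assumption about how that could look, not the definitions actually used here: `AgentSpec`, its fields, the prompt wording, and `overlapping_scopes` are all hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical agent definition with explicit scope boundaries."""
    name: str
    role: str
    in_scope: tuple[str, ...]
    out_of_scope: tuple[str, ...]

    def system_prompt(self) -> str:
        # State the "should not" list as explicitly as the "should" list.
        does = "\n".join(f"- {item}" for item in self.in_scope)
        does_not = "\n".join(f"- {item}" for item in self.out_of_scope)
        return (
            f"You are {self.name}, the {self.role} specialist.\n"
            f"In scope:\n{does}\n"
            f"Out of scope (defer to a teammate):\n{does_not}"
        )


MARKETS = "identify target markets and opportunities"
EXECUTION = "design the execution plan"
FINANCE = "estimate ROI and validate the plan financially"

TEAM = [
    AgentSpec("P. Aris", "marketing", (MARKETS,), (EXECUTION, FINANCE)),
    AgentSpec("P. Ethan", "strategy", (EXECUTION,), (MARKETS, FINANCE)),
    AgentSpec("H. Liam", "finance", (FINANCE,), (MARKETS, EXECUTION)),
]


def overlapping_scopes(team: list[AgentSpec]) -> list[tuple[str, str, str]]:
    """Return (responsibility, first owner, second owner) for any duplicates."""
    owners: dict[str, str] = {}
    overlaps = []
    for spec in team:
        for item in spec.in_scope:
            if item in owners:
                overlaps.append((item, owners[item], spec.name))
            owners[item] = spec.name
    return overlaps


print(TEAM[0].system_prompt())
print("Overlaps:", overlapping_scopes(TEAM))
```

Keeping the scopes as data means an overlap check like this can run before any agent is started, which is cheaper than discovering the overlap in a conversation.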

Where Do Teams Beat Individuals?

Just as human teams comprising experts from diverse backgrounds tackle complex challenges more effectively, AI agent teams combine unique strengths. But the advantage is not uniform - it shows up most in specific scenarios:

| Scenario | Why Teams Win | What a Single Agent Misses |
|---|---|---|
| Corporate Strategy | Marketing, operations, finance, and domain experts co-create holistic plans | Trade-offs between departments, second-order effects |
| Technical Architecture | Research, design, implementation, and review happen in parallel | Integration risks, testing strategy, operational concerns |
| Content Creation | Ideation, research, writing, and editing as distinct stages | Consistency, fact-checking depth, structural integrity |
| Decision Making | Multiple perspectives surface hidden trade-offs | Confirmation bias, blind spots in reasoning |

The key insight: the path forward is not pursuing an omnipotent, one-size-fits-all AI. It is fostering ecosystems of specialized agents that collaborate, challenge each other’s assumptions, and produce solutions transcending what any individual could develop alone. But building these teams introduces its own set of challenges.

What Are the Hard Engineering Problems?

Coordinating AI teams is not plug-and-play. Running this setup daily has taught me where the real friction lives:

| Challenge | Why It’s Hard | What I’ve Learned |
|---|---|---|
| Knowledge Integration | Agents must share and build on each other’s outputs across domains | Shared memory and explicit context passing between agents are essential |
| Dynamic Interaction | Fluid dialogue, clarifying questions, conversational control transfer | Agent hierarchy with clear delegation protocols works well |
| Task Decomposition | Breaking complex tasks into sub-tasks for the right specialist | Clear task framing by the human orchestrator determines everything else |
| Domain Grounding | Each agent needs deep understanding of its specialty boundaries | System prompts and skill definitions must be explicit and tested |
| Human-AI Teaming | Humans must guide AI teams while maintaining oversight | Transparent UI showing sub-agent activity is non-negotiable |

The last point deserves emphasis. A transparent agent hierarchy - seeing which sub-agents are active, what tool calls are being made, what each agent contributed - is not a nice-to-have. It is essential for trust. Without visibility into the orchestration, you cannot verify that the right specialist handled the right sub-task. And trust is what determines whether you actually rely on the system or quietly go back to doing everything manually.
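
A UI like that needs something to display. A minimal sketch of the underlying record, assuming the orchestrator appends one entry per hand-off, might look like this; `Trace`, `TraceEvent`, and the `web_search` tool name are hypothetical and not taken from any particular framework.

```python
from dataclasses import dataclass, field


@dataclass
class TraceEvent:
    agent: str
    subtask: str
    tool_calls: list[str] = field(default_factory=list)


@dataclass
class Trace:
    """Hypothetical activity log: who handled which sub-task, with which tools."""
    events: list[TraceEvent] = field(default_factory=list)

    def record(self, agent: str, subtask: str, tool_calls=()) -> None:
        self.events.append(TraceEvent(agent, subtask, list(tool_calls)))

    def handled_by(self, subtask: str) -> list[str]:
        # Answers the verification question: did the right specialist do this?
        return [e.agent for e in self.events if e.subtask == subtask]

    def render(self) -> str:
        lines = []
        for e in self.events:
            tools = ", ".join(e.tool_calls) or "no tool calls"
            lines.append(f"{e.agent} -> {e.subtask} ({tools})")
        return "\n".join(lines)


trace = Trace()
trace.record("P. Aris", "target markets", ["web_search"])  # tool name is illustrative
trace.record("H. Liam", "ROI estimate")
print(trace.render())
```

With a record like this, "which specialist handled the ROI estimate?" becomes a lookup (`trace.handled_by("ROI estimate")`) rather than a guess.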

Key Takeaways

- Specialist agents outperform generalist ones on complex, multi-faceted tasks. The depth gained from focus more than compensates for the coordination overhead.

- Clear scope boundaries prevent overlap. Define what each agent should not do just as carefully as what it should do.

- The orchestrator role is the most critical. Whether human or AI, the orchestrator decides which specialist handles each sub-task and how to synthesize their outputs into a coherent result.

- Transparency enables trust. If you cannot see what each agent did, you cannot verify the result. This is the difference between a useful system and an expensive toy.

- This mirrors effective human teams. The best engineering orgs do not have one person doing everything - they have specialists who collaborate with clear interfaces. AI teams work the same way.

Your homework: pick one task you do regularly that involves multiple types of thinking - research, design, analysis, execution. Instead of asking one AI to do it all in one shot, break it into those distinct phases and prompt separately for each, feeding the output of one into the next. The quality difference will surprise you - and that is the core principle behind collaborative AI, even without the multi-agent infrastructure.
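
If you would rather script the homework than paste prompts by hand, the loop is short. This is a sketch under assumptions: `run_phases` and the prompt layout are hypothetical, the task string is a placeholder, and `echo_model` is a stand-in you would swap for a call to whichever model you use.

```python
from typing import Callable

PHASES = ("research", "design", "analysis", "execution")


def run_phases(task: str, ask: Callable[[str], str], phases=PHASES) -> dict[str, str]:
    """Prompt once per phase, feeding each phase's output into the next."""
    outputs: dict[str, str] = {}
    previous = ""
    for phase in phases:
        prompt = f"Task: {task}\nPhase: {phase}\n"
        if previous:
            prompt += f"Output of the previous phase:\n{previous}\n"
        previous = ask(prompt)
        outputs[phase] = previous
    return outputs


def echo_model(prompt: str) -> str:
    # Stand-in for a real model call: names the phase and whether it saw context.
    phase = prompt.split("Phase: ")[1].splitlines()[0]
    has_context = "Output of the previous phase" in prompt
    return f"{phase} notes (built on earlier output: {has_context})"


results = run_phases("Plan a product launch", echo_model)
for phase, text in results.items():
    print(f"{phase}: {text}")
```

Only the immediately preceding output is passed forward here; passing the full history is a reasonable variation if the phases need it and the context window allows.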
