May 9, 2023


The Journey Begins

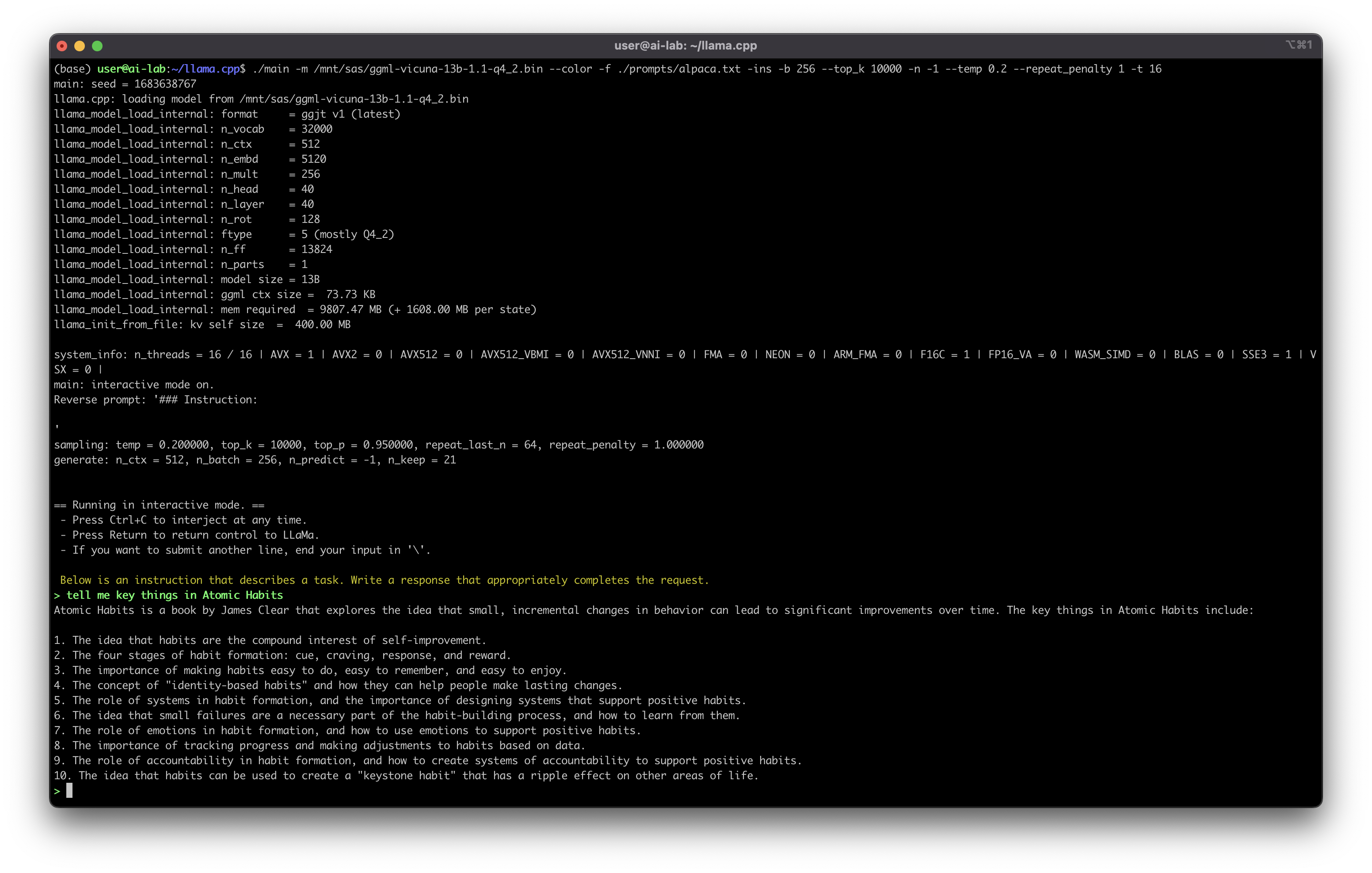

A few weeks ago, I set up a self-hosted ChatGPT alternative on my homelab, built on LLaMA, the large language model released by Meta. I started with Alpaca-7B. The speed was acceptable, but the response quality was underwhelming.

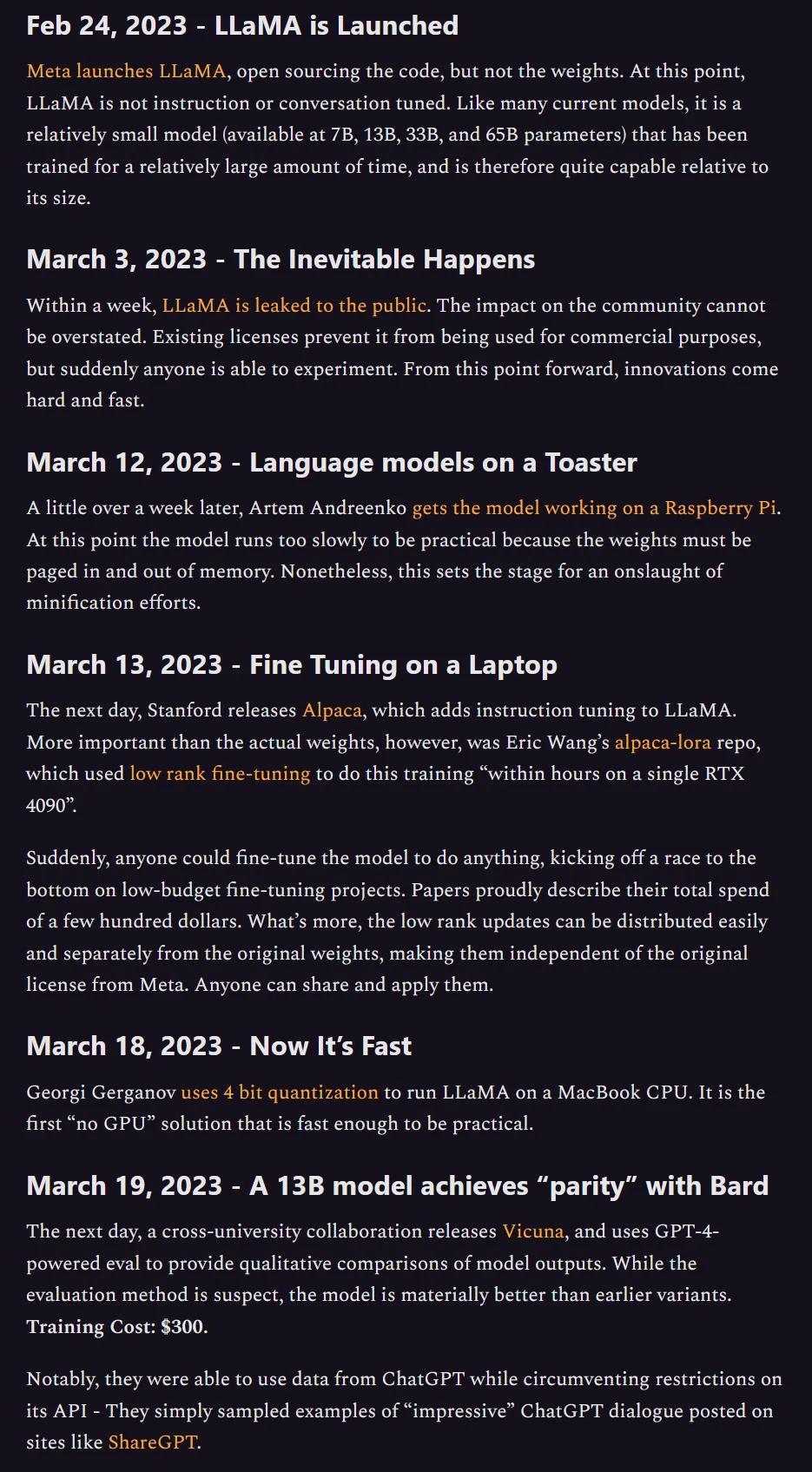

Recently, I upgraded to Vicuna-13B, which its authors claim reaches 92% of ChatGPT's quality, as judged by GPT-4.

This model was also mentioned in the now-famous leaked Google memo, which argued that open-source models were rapidly closing the gap with proprietary ones.

The memo’s core thesis, that neither Google nor OpenAI has a lasting moat against open source, matches what I see on my own hardware. That trend creates a real opportunity for anyone willing to run their own infrastructure.

Why Self-Hosting Matters

The case for self-hosted LLMs is not theoretical for me - I run them daily. Here is what makes it compelling:

| Aspect | Cloud ChatGPT | Self-Hosted |
|---|---|---|
| Data security | Data on third-party servers | Data never leaves your network |
| Customization | Limited to provider’s features | Full control over model, prompts, fine-tuning |
| Scalability | Determined by provider | Scale on your own terms |
| Cost model | Recurring subscription/API fees | Up-front hardware, plus ongoing electricity |
| Availability | Dependent on provider uptime | You control uptime and maintenance |
| Privacy | Provider’s privacy policy applies | Your data, your rules |

The data security point deserves emphasis. With cloud-based ChatGPT, every conversation touches someone else’s infrastructure. For personal use, that may be acceptable. For anything involving proprietary business data, intellectual property, or sensitive personal information, it is a genuine risk that many people underestimate.
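If you want that guarantee enforced rather than assumed, a small guard in your client code can refuse to send prompts anywhere except a loopback or private address. A minimal sketch, assuming the model is served over HTTP inside your own network; the function name and example URLs are hypothetical, and the helper deliberately rejects hostnames other than `localhost` instead of resolving them:

```python
import ipaddress
from urllib.parse import urlparse


def is_local_endpoint(url: str) -> bool:
    """Return True only if the URL points at localhost or a loopback/private IP.

    Hypothetical helper: hostnames other than "localhost" are rejected rather
    than resolved, so a DNS change cannot silently send prompts off-network.
    """
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal (e.g. "api.example.com"): treat it as external.
        return False
    return addr.is_loopback or addr.is_private


if __name__ == "__main__":
    for url in (
        "http://127.0.0.1:8080/completion",  # loopback -> True
        "http://192.168.1.50:8080/",         # private LAN -> True
        "https://api.example.com/v1/chat",   # external hostname -> False
    ):
        print(url, is_local_endpoint(url))
```

Rejecting unresolved hostnames is the conservative choice: a LAN name such as a local DNS entry would have to be replaced by its IP address, but nothing can slip out because a record changed.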

Real Use Cases

The generic “use AI for everything” advice is not helpful. Here are the use cases I have seen deliver real value - both on my homelab and in professional contexts:

For Individuals and Homelabbers

- Personal AI assistant - Automate daily tasks, manage schedules, draft communications. Running locally means your personal context stays private.

- Learning companion - Ask questions about complex topics, get explanations tailored to your level. No usage caps, no subscription fees.

- Creative collaborator - Generate character backstories, brainstorm ideas, or role-play scenarios with custom-configured characters and personalities.
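Custom characters and personalities mostly come down to what goes into the prompt. A minimal sketch of assembling a persona prompt in the `USER:`/`ASSISTANT:` turn layout commonly used with Vicuna v1.1; the `Persona` class and `build_prompt` helper are hypothetical, and the exact template is an assumption, so check the documentation of whichever model you run:

```python
from dataclasses import dataclass


@dataclass
class Persona:
    """Hypothetical character definition for a locally hosted chat model."""
    name: str
    traits: list[str]
    backstory: str


def build_prompt(persona: Persona, history: list[tuple[str, str]], user_msg: str) -> str:
    """Assemble one prompt string: persona description, past turns, new message.

    Assumption: the model expects Vicuna v1.1-style turns, with each finished
    assistant reply terminated by "</s>". Other fine-tunes use other templates.
    """
    system = (
        f"You are {persona.name}. "
        f"Personality: {', '.join(persona.traits)}. "
        f"Backstory: {persona.backstory} "
        "Stay in character and answer the user's messages."
    )
    parts = [system]
    for user_turn, assistant_turn in history:
        parts.append(f"USER: {user_turn} ASSISTANT: {assistant_turn}</s>")
    # Leave the final ASSISTANT: open so the model writes the next reply.
    parts.append(f"USER: {user_msg} ASSISTANT:")
    return " ".join(parts)


if __name__ == "__main__":
    mira = Persona("Mira", ["curious", "dry-witted"], "A retired lighthouse keeper.")
    print(build_prompt(mira, [("Hi!", "Evening. Mind the fog.")], "Any ships tonight?"))
```

Because the persona lives entirely in the prompt, changing a character means editing data, not retraining anything.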

For Organizations

- Technical support assistant - A 24/7 first-line responder for IT queries. Trained on internal documentation, it handles common questions and escalates edge cases. Self-hosted means your IT infrastructure details never leave the building.

- Knowledge management system - A centralized Q&A engine drawing from internal sources. Employees get instant answers to frequently asked questions without searching through wikis and Confluence pages.

- Business intelligence assistant - Real-time insights and trend analysis from company data sources. Leaders get actionable intelligence through natural language queries instead of waiting for analyst reports.

- Onboarding and training - An interactive assistant that guides new employees through processes, answers role-specific questions, and tracks learning progress.
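The support assistant and the knowledge base both depend on putting the right internal documents in front of the model when a question arrives. One common approach is retrieval: find the most relevant documents and paste them into the prompt. A minimal sketch that uses plain keyword overlap as a stand-in for the embedding search a production system would more likely use; every name and sample document here is hypothetical:

```python
import re
from collections import Counter

# Tiny illustrative stopword list so filler words don't dominate the scores.
STOPWORDS = {"a", "an", "the", "to", "do", "i", "how", "is", "of", "and", "in", "my"}


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens minus stopwords; a crude stand-in for a real tokenizer."""
    return [t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS]


def retrieve(question: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return up to k document IDs ranked by keyword overlap with the question."""
    q_terms = set(tokenize(question))
    scores = {}
    for doc_id, text in docs.items():
        counts = Counter(tokenize(text))
        scores[doc_id] = sum(counts[t] for t in q_terms)
    ranked = sorted(scores, key=lambda d: scores[d], reverse=True)
    return [d for d in ranked[:k] if scores[d] > 0]


def build_grounded_prompt(question: str, docs: dict[str, str], k: int = 2) -> str:
    """Prepend the best-matching documents so the model answers from them."""
    context = "\n\n".join(f"[{d}]\n{docs[d]}" for d in retrieve(question, docs, k))
    return (
        "Answer using only the documents below. "
        "If they do not contain the answer, say so and suggest escalating.\n\n"
        f"{context}\n\nQuestion: {question}\nAnswer:"
    )


if __name__ == "__main__":
    internal_docs = {
        "vpn.md": "To connect to the VPN, install the client and sign in with your staff account.",
        "printer.md": "Printer jams: open tray two and remove the paper.",
        "leave.md": "Annual leave requests go through the HR portal.",
    }
    print(build_grounded_prompt("How do I connect to the VPN?", internal_docs))
```

The "say so and suggest escalating" instruction is what lets a first-line assistant hand edge cases to a human instead of guessing.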

```mermaid
flowchart TD
    A[Self-Hosted LLM] --> B[Personal Use]
    A --> C[Organization Use]
    B --> D[AI Assistant]
    B --> E[Learning Companion]
    B --> F[Creative Collaborator]
    C --> G[Tech Support]
    C --> H[Knowledge Management]
    C --> I[Business Intelligence]
    C --> J["Training & Onboarding"]
```

What I Learned

Running open-source LLMs on my own hardware taught me several things:

- Quality is improving fast. The gap between open-source and proprietary models narrows with every release: only a few months ago open models trailed ChatGPT badly, and the best of them are now claimed to reach 90% or more of its quality.

- Hardware matters, but less than you think. A well-quantized 13B model runs comfortably on consumer hardware. You do not need a data centre.

- The real value is in customization. Fine-tuning, custom system prompts, and domain-specific knowledge bases transform a generic chatbot into something genuinely useful.

- Self-hosting is a mindset. It requires more effort upfront, but the long-term benefits in privacy, cost, and control are substantial.
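The hardware point is mostly arithmetic. Weight memory is roughly parameters × bits per weight ÷ 8 bytes, so quantizing from 16-bit to 4-bit cuts a 13B model's weights by a factor of four. A back-of-envelope sketch; the helper name is hypothetical, and real quantization formats store extra per-block scale factors while inference also needs memory for the context cache, so treat these figures as lower bounds:

```python
def weight_memory_gb(params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in decimal GB (1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for bits in (16, 8, 4):
        print(f"13B @ {bits}-bit: ~{weight_memory_gb(13e9, bits):.1f} GB")
    # 13B @ 16-bit: ~26.0 GB
    # 13B @ 8-bit: ~13.0 GB
    # 13B @ 4-bit: ~6.5 GB
```

At roughly 6.5 GB of weights, a 4-bit 13B model fits within the memory of many ordinary desktops, whereas the 16-bit original would not.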

What Is Next

The trend of open-source LLM development will not slow down. As models get smaller and more efficient, the hardware bar drops further. The question is not whether self-hosted AI will be viable for most organizations - it is when they will start taking it seriously.

For anyone running a homelab or managing infrastructure, I strongly recommend experimenting with self-hosted LLMs. The learning curve is manageable, the costs are predictable, and the capabilities are genuinely impressive.

