May 9, 2023

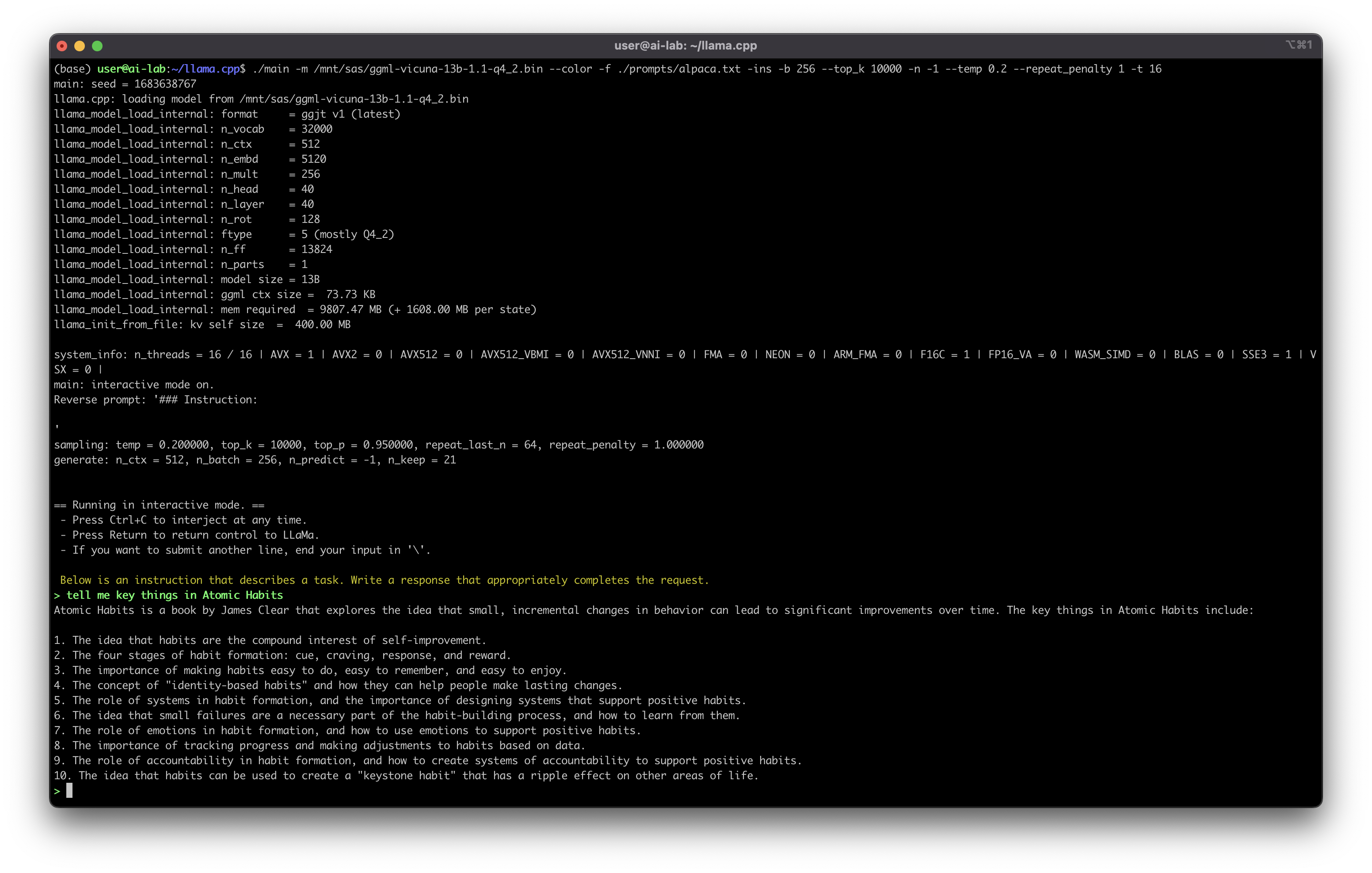

A few weeks ago, I started running my own version of ChatGPT on my homelab. The first attempt was Alpaca-7B, Stanford’s instruction-tuned variant of Meta’s LLaMA model - and honestly, the response quality left a lot to be desired. It could string sentences together, but it felt like talking to someone who’d skimmed the textbook rather than studied it. I almost shelved the whole experiment.

Then I upgraded to Vicuna-13B, whose authors claimed it delivers 92% of ChatGPT’s quality in their GPT-4-judged evaluation. The difference was immediately noticeable - coherent multi-turn conversations, sensible code suggestions, and responses that actually addressed what I asked instead of dancing around it. That upgrade changed my perspective on what’s possible with self-hosted AI.

Why Should Anyone Bother Self-Hosting?

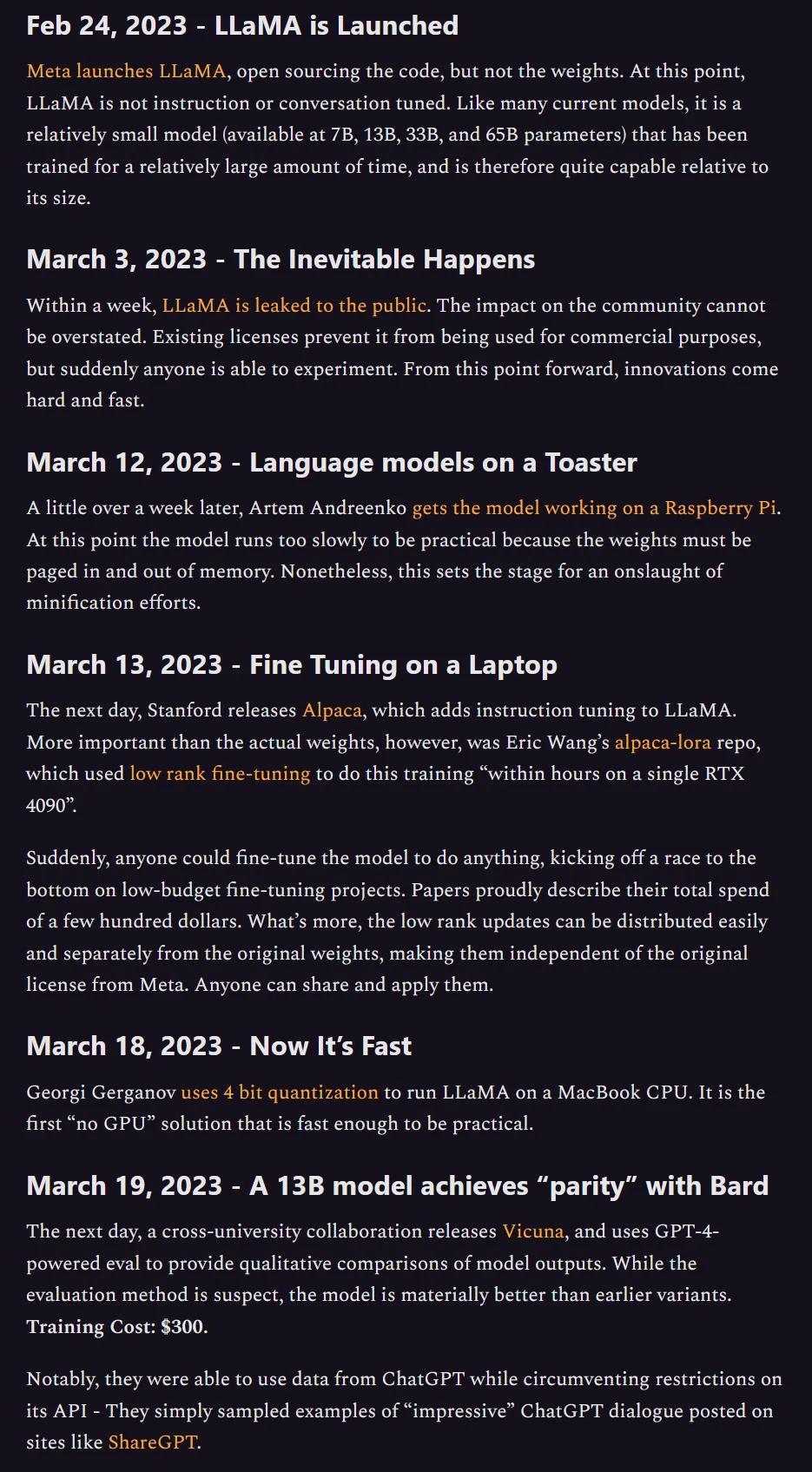

Vicuna-13B was mentioned in the now-famous leaked Google memo that argued open-source models were rapidly closing the gap with proprietary ones. The memo’s core thesis - that neither Google nor OpenAI has a lasting moat against open-source - has proven remarkably prescient.

The case for self-hosted LLMs isn’t theoretical for me - I run them daily. Here’s how the trade-offs actually play out:

| Factor | Cloud ChatGPT | Self-Hosted |
|---|---|---|
| Data security | Data on third-party servers | Data never leaves your network |
| Customization | Limited to provider’s features | Full control over model, prompts, fine-tuning |
| Scalability | Determined by provider | Scale on your own terms |
| Cost model | Recurring subscription/API fees | Upfront hardware cost plus ongoing electricity |
| Availability | Dependent on provider uptime | You control uptime and maintenance |
| Privacy | Provider’s privacy policy applies | Your data, your rules |

The data security point deserves emphasis. With cloud-based ChatGPT, every conversation touches someone else’s infrastructure. For personal use, that may be acceptable. For anything involving proprietary business data, intellectual property, or sensitive personal information, it’s a genuine risk. According to Samsung’s internal investigation, employees had already leaked confidential source code through ChatGPT by early 2023 - a risk that simply doesn’t exist when the model runs on your own hardware. But if self-hosting is so appealing, what can you actually do with it?

What Are the Real Use Cases?

The generic “use AI for everything” advice isn’t helpful. Here are the use cases that I’ve found deliver real value - both on my homelab and in professional contexts.

For Individuals and Homelabbers

- Personal AI assistant - Automate daily tasks, manage schedules, draft communications. Running locally means your personal context stays private.

- Learning companion - Ask questions about complex topics, get explanations tailored to your level. No usage caps, no subscription fees.

- Creative collaborator - Generate character backstories, brainstorm ideas, or role-play scenarios with custom-configured characters and personalities.

For Organizations

- Technical support assistant - A 24/7 first-line responder for IT queries. Trained on internal documentation, it handles common questions and escalates edge cases. Self-hosted means your IT infrastructure details never leave the building.

- Knowledge management system - A centralized Q&A engine drawing from internal sources. Employees get instant answers without searching through wikis and Confluence pages.

- Business intelligence assistant - Real-time insights and trend analysis from company data. Leaders get actionable intelligence through natural language queries instead of waiting for analyst reports.

- Onboarding and training - An interactive assistant that guides new employees through processes and answers role-specific questions.

```mermaid
flowchart TD
    A[Self-Hosted LLM] --> B[Personal Use]
    A --> C[Organization Use]
    B --> D[AI Assistant]
    B --> E[Learning Companion]
    B --> F[Creative Collaborator]
    C --> G[Tech Support]
    C --> H[Knowledge Management]
    C --> I[Business Intelligence]
    C --> J[Training & Onboarding]
```

So the use cases are compelling. But what does the experience of actually running these models teach you?

What Did I Actually Learn?

Running open-source LLMs on my own hardware taught me several things that you won’t find in the marketing materials:

| Lesson | What I Expected | What Actually Happened |
|---|---|---|
| Quality | Significant gap with ChatGPT | Gap narrows with every release - Vicuna-13B is genuinely usable |
| Hardware | Need expensive GPUs | A well-quantized 13B model runs comfortably on consumer hardware |
| Value | Generic chatbot replacement | Real value is in customization - fine-tuning and domain-specific knowledge bases |
| Effort | Set and forget | Requires more effort upfront, but long-term benefits in privacy, cost, and control are substantial |
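The hardware row comes down to simple arithmetic, which is easy to check yourself. The sketch below estimates the memory needed for the weights of a 13B-parameter model at different precisions. The function name `weight_memory_gb` is my own, and the figure covers weights only: real quantized files are somewhat larger because they store scaling data alongside the weights, and the runtime also needs room for the context cache. Treat it as a rough lower bound rather than a sizing guide.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in decimal gigabytes.

    Assumption: every weight is stored at the same bit width, with no
    overhead for quantization scales, context cache, or activations.
    """
    return n_params * bits_per_weight / 8 / 1e9


# Compare full 16-bit weights with 8-bit and 4-bit quantization for a 13B model.
for bits in (16, 8, 4):
    print(f"13B at {bits}-bit: ~{weight_memory_gb(13e9, bits):.1f} GB")
# 13B at 16-bit: ~26.0 GB
# 13B at 8-bit: ~13.0 GB
# 13B at 4-bit: ~6.5 GB
```

At 16-bit the weights alone exceed what most consumer GPUs offer, while the 4-bit figure fits within the memory of many consumer cards or a machine with 16GB of RAM.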

The third point is worth dwelling on. A generic self-hosted LLM is interesting but not transformative. The moment you feed it your own documentation, configure custom system prompts for your specific workflow, or fine-tune it on domain-specific data - that’s when it stops being a toy and starts being a tool. The difference between “I have ChatGPT at home” and “I have an AI assistant that knows my systems” is the difference between a demo and a daily driver.
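To make "feed it your own documentation" concrete, here is a minimal sketch of the lightest form of customization: a custom system prompt plus a few relevant notes pasted into the prompt. Everything in it is hypothetical and for illustration only, including the function names, the word-overlap scoring, and the sample notes. It is not how any particular tool does it, and a real setup would more likely use embeddings for retrieval.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return the names of the k docs sharing the most words with the question."""
    q_words = tokenize(question)
    ranked = sorted(
        docs,
        key=lambda name: len(q_words & tokenize(docs[name])),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(system_prompt: str, question: str,
                 docs: dict[str, str], k: int = 2) -> str:
    """Combine the system prompt, the retrieved notes, and the question."""
    context = "\n\n".join(
        f"[{name}]\n{docs[name]}" for name in retrieve(question, docs, k)
    )
    return f"{system_prompt}\n\nContext:\n{context}\n\nQuestion: {question}\nAnswer:"


# Hypothetical homelab notes, invented for this example.
DOCS = {
    "backup.md": "Nightly backup runs at 02:00 and copies the NAS share to the offsite disk.",
    "vpn.md": "The VPN uses WireGuard on port 51820; peers are listed in wg0.conf.",
    "dns.md": "Internal DNS is served by the Pi-hole container on the management VLAN.",
}
SYSTEM_PROMPT = "You are an assistant for my homelab. Answer only from the context provided."

prompt = build_prompt(SYSTEM_PROMPT, "What port does the VPN use?", DOCS, k=1)
print(prompt)
```

The resulting string is what you would send to the local model. Only the VPN note ends up in the context here, because it shares the most words with the question.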

Key Takeaways

- Self-hosting is viable today. You don’t need a data centre - consumer hardware with a decent GPU handles quantized 13B models comfortably.

- Data privacy is the strongest argument. Every conversation with cloud AI touches someone else’s infrastructure. Self-hosted means your data never leaves your network.

- The real value is customization. Generic chatbots are interesting; domain-specific assistants fine-tuned on your data are transformative.

- Open-source is closing the gap fast. The leaked Google memo wasn’t hype - it was an accurate read of where the industry is heading.

Here’s a quick experiment: if you have any spare hardware sitting around - even a machine with 16GB of RAM - download Ollama and pull a small model. Spend 30 minutes asking it questions about a topic you know well. You’ll quickly see both the potential and the limitations, and that hands-on experience is worth more than any amount of reading about it.
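If you would rather script that experiment than type into a terminal, the sketch below sends a single prompt to Ollama's local HTTP API. It assumes Ollama is running on its default port (11434) and that you have already pulled a model with `ollama pull`. `MODEL_NAME` is a placeholder for whatever model you pulled, and the helper names `build_request` and `ask` are my own.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text from the JSON reply."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]


# Usage, once the server is up and a model is pulled
# ("MODEL_NAME" is a placeholder, not a real model name):
# print(ask("MODEL_NAME", "Explain RAID 5 to someone who knows RAID 1."))
```

The request never leaves `localhost`, which is the whole point of the exercise.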
