March 29, 2025

The Problem MCP Solves

Generative AI models like Gemini, Claude, and ChatGPT are highly capable, but on their own they operate in a vacuum. They cannot see your files, query your databases, or interact with your tools. Getting AI to talk to your systems has traditionally meant building a custom bridge for every connection: each tool, data source, and API needed its own bespoke integration.

Anthropic’s open-source Model Context Protocol (MCP) changes this by creating a standardized way for AI models and digital tools to communicate. I run MCP extensively on my homelab - connecting my AI agents to local files, web search, GitHub, and databases through a single protocol instead of a dozen custom integrations.

TL;DR: MCP is the USB standard for AI. It lets models connect to data sources and tools (local files, GitHub, databases, web search) using a single protocol. Your AI gets real-time, relevant information without developers building custom connections for every tool - and without handing sensitive API keys to the AI provider.

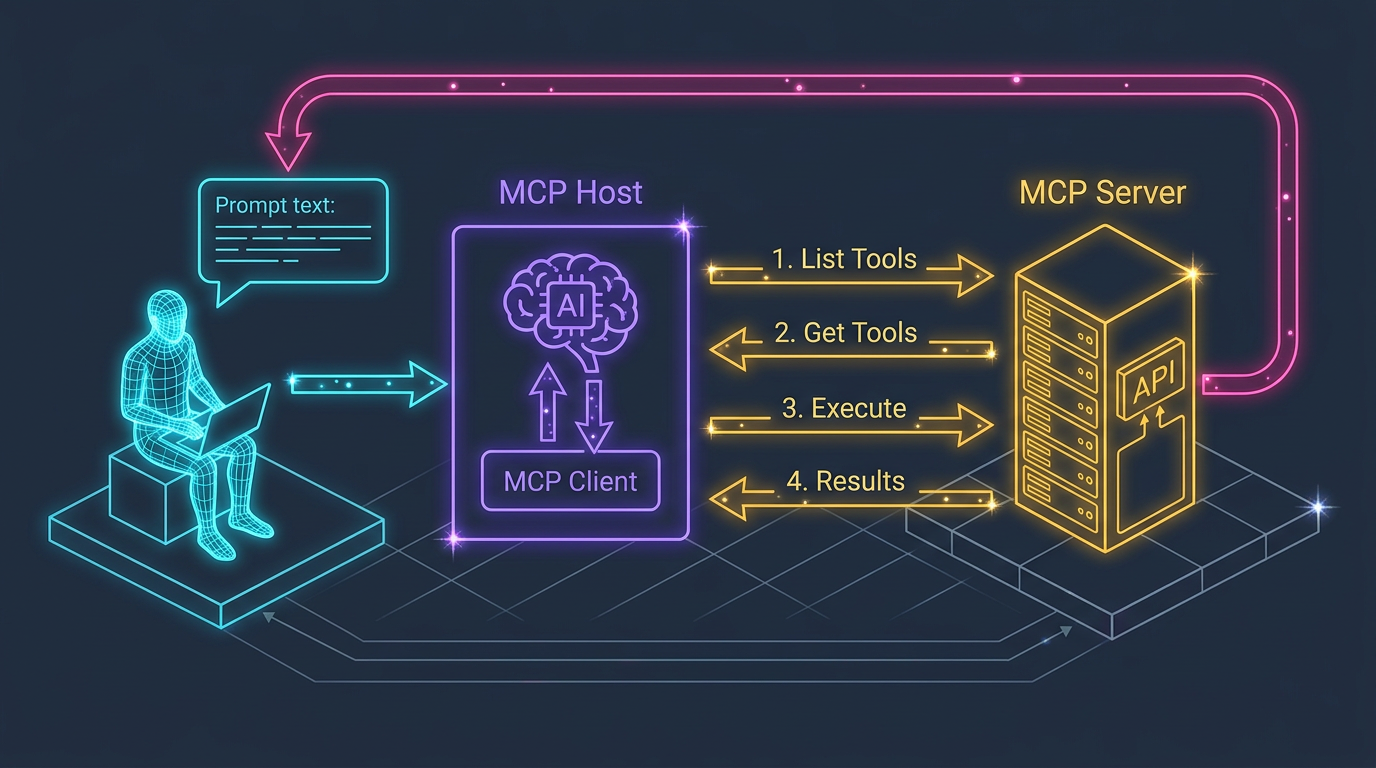

How It Works

flowchart TD
    U[User] -->|Query| H[MCP Host]
    H -->|Forwards| A[AI Agent]
    A -->|1/ List tools| C[MCP Client]
    C -->|Request| S[MCP Server]
    S -->|2/ Available tools| C
    A -->|3/ Execute tool| C
    C -->|Request| S
    S -->|4/ Calls API| T[External Tool / Data]
    T -->|Results| S
    S -->|5/ Returns results| C
    C -->|Context enriched| A
    A -->|Smart answer| U

The architecture has three core components:

| Component | Role | Example |
|---|---|---|
| MCP Host | Environment where the AI application runs | Claude desktop app, Agent Zero |
| MCP Client | The messenger within the AI app that speaks MCP | Built into the host |
| MCP Server | Manages access to specific tools or data sources | File system server, GitHub server, Brave search server |

A Walkthrough

Here is what happens when you ask an MCP-powered AI: “What is great about Google’s latest Gemini 2.5 Pro?”

- Your question enters the MCP Host

- The AI Agent recognizes it needs current information

- Through the MCP Client, it asks the MCP Server: “What tools do you have?” and gets back a list including brave_web_search

- It requests: “Search for Gemini 2.5 Pro features, give me 5 results”

- The MCP Server securely calls the Brave Search API and returns results

- The AI combines your original question with the fresh search results

- You get a detailed, up-to-date answer
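
The discovery and execution steps above can be sketched in code. MCP messages are JSON-RPC 2.0, and the protocol defines `tools/list` and `tools/call` methods. Everything else here is an assumption made for illustration: the in-process `handle_request` function stands in for a real server, `fake_search` is a stub rather than a call to the Brave Search API, and the initialization handshake and transport layer are left out. Treat it as a minimal sketch of the message flow, not as the official SDK.

```python
import itertools
import json

# Tool catalogue of a toy server (hypothetical stand-in for a real MCP server).
TOOLS = [
    {
        "name": "brave_web_search",
        "description": "Search the web (stubbed for this sketch)",
        "inputSchema": {
            "type": "object",
            "properties": {
                "query": {"type": "string"},
                "count": {"type": "integer"},
            },
            "required": ["query"],
        },
    }
]


def fake_search(query, count=5):
    # Stub: a real server would call the search API here.
    return [f"Result {i + 1} for {query!r}" for i in range(count)]


def handle_request(raw):
    """Dispatch one JSON-RPC 2.0 request string and return the response string."""
    req = json.loads(raw)
    reply = {"jsonrpc": "2.0", "id": req["id"]}
    if req["method"] == "tools/list":
        reply["result"] = {"tools": TOOLS}
    elif req["method"] == "tools/call":
        params = req["params"]
        if params["name"] == "brave_web_search":
            hits = fake_search(**params["arguments"])
            reply["result"] = {"content": [{"type": "text", "text": "\n".join(hits)}]}
        else:
            reply["error"] = {"code": -32602, "message": "Unknown tool"}
    else:
        reply["error"] = {"code": -32601, "message": "Method not found"}
    return json.dumps(reply)


_ids = itertools.count(1)


def call(method, params=None):
    """Client side: build a request, send it to the toy server, parse the reply."""
    request = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        request["params"] = params
    return json.loads(handle_request(json.dumps(request)))


# Step 1-2: discover tools. Step 3-5: execute one and read the result.
listing = call("tools/list")
tool_names = [tool["name"] for tool in listing["result"]["tools"]]
answer = call(
    "tools/call",
    {"name": "brave_web_search",
     "arguments": {"query": "Gemini 2.5 Pro features", "count": 5}},
)
search_text = answer["result"]["content"][0]["text"]
```

The agent never hardcodes the tool: it learns the name and input schema from the `tools/list` reply, then fills in arguments for `tools/call`.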

The key security insight: the MCP Server controls its resources. Your API keys never touch the AI model provider - the server handles authentication independently.
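
To make the credential boundary concrete, here is a minimal sketch of a server-side tool handler. The handler name `run_search_tool`, the environment variable name `BRAVE_API_KEY`, and the stubbed results are assumptions for illustration. The point is only that the key is read where the server runs and is never copied into the payload that travels back to the model.

```python
import json
import os


def run_search_tool(arguments, environ=os.environ):
    """Hypothetical server-side handler: reads the key locally, returns only results."""
    api_key = environ.get("BRAVE_API_KEY")  # assumed variable name
    if not api_key:
        return {
            "isError": True,
            "content": [{"type": "text", "text": "Search is not configured"}],
        }
    # A real server would send api_key in a request header to the search API here.
    # This sketch returns a stub so it runs without network access.
    results = [f"stub hit for {arguments['query']}"]
    return {
        "isError": False,
        "content": [{"type": "text", "text": "\n".join(results)}],
    }


# Demo with an injected environment so no real secret is needed.
demo_env = {"BRAVE_API_KEY": "secret-123"}
response = run_search_tool({"query": "Gemini 2.5 Pro"}, environ=demo_env)
key_leaked = "secret-123" in json.dumps(response)
```

Because the response carries only the tool output, whatever the model or its provider sees is limited to search results, not the credential used to fetch them.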

Why MCP Matters

Versus Traditional AI Tool Integration

| Aspect | Traditional Integration | MCP |
|---|---|---|
| Integration effort | Custom code per tool | Integrate once with MCP |
| API key exposure | Keys shared with AI provider | Keys stay with MCP Server |
| Tool discovery | Hardcoded | Dynamic - AI discovers available tools |
| Standardization | Proprietary per platform | Open standard |
| Scaling tools | Linear effort increase | Near-zero marginal cost |

From My Homelab

On my setup, I run multiple MCP servers: one for local file access, one for web search, one for GitHub, and one for deep documentation research. My AI agents can dynamically discover and use any of these without me writing a single line of glue code per tool. When I add a new MCP server - say, for Jira or calendar access - every agent in my system can immediately use it.
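
The "add a server and every agent can use it" behaviour comes from discovery happening at call time rather than at build time. The toy model below illustrates that idea. `ToolServer` and `Agent` are hypothetical classes invented for this sketch, and the tools are lambdas instead of real file, search, or calendar integrations.

```python
class ToolServer:
    """Toy stand-in for an MCP server: a named bundle of callable tools."""

    def __init__(self, name, tools):
        self.name = name
        self._tools = dict(tools)  # tool name -> callable

    def list_tools(self):
        return sorted(self._tools)

    def call_tool(self, tool, **arguments):
        return self._tools[tool](**arguments)


class Agent:
    """Toy agent that asks every known server for its tools on each use."""

    def __init__(self, servers):
        self.servers = servers  # shared list, so new servers show up for all agents

    def discover(self):
        return {
            tool: server
            for server in self.servers
            for tool in server.list_tools()
        }

    def use(self, tool, **arguments):
        return self.discover()[tool].call_tool(tool, **arguments)


servers = [
    ToolServer("files", {"read_file": lambda path: f"<contents of {path}>"}),
    ToolServer("search", {"web_search": lambda query: [f"hit for {query}"]}),
]
researcher = Agent(servers)
coder = Agent(servers)

before = sorted(coder.discover())
# Registering one new server requires no change to either agent.
servers.append(ToolServer("calendar", {"list_events": lambda day: [f"standup on {day}"]}))
after = sorted(coder.discover())
events = researcher.use("list_events", day="Monday")
```

Neither agent was modified when the calendar server appeared, which is the property that keeps the marginal cost of a new tool low.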

The Trade-Offs

Strengths:

- Radically simplifies AI-to-tool integration

- Keeps credentials secure - the server manages access, not the AI provider

- Extensible through protocol features such as Resources, Prompts, Tools, and Sampling

- Handles diverse data: files, database records, API responses, images, logs

Challenges:

- Success depends on widespread adoption (network effect problem)

- Initial server setup can be non-trivial for less technical users

- A poorly configured server could become a performance bottleneck or security risk

- The standard is still evolving - expect breaking changes

Practical Applications

MCP is not theoretical. Here are patterns I have seen work:

- Code Development Workflows - Instruct your AI to create a GitHub repo, write boilerplate, create branches, and push code - all within a single conversation

- Intelligent Data Queries - “Query our SQLite database for customers who ordered product X last month” - MCP enables secure interaction with local data

- Internal Knowledge Assistants - Build an AI that queries company databases, checks Jira, summarizes Slack conversations, and accesses internal docs through one interface

- Automated Orchestration - Connect business tools so AI can coordinate tasks across platforms based on natural language instructions
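
As an illustration of the data-query pattern above, this is roughly what the handler behind a database tool might look like. The `orders` schema, the sample rows, and the function name `customers_who_ordered` are all made up for this sketch. The one design choice worth copying is the parameterized query: values supplied by the model are passed as bound parameters rather than pasted into the SQL string.

```python
import sqlite3


def build_demo_db():
    """Create an in-memory database with a hypothetical orders table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (customer TEXT, product TEXT, ordered_on TEXT)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [
            ("Ada", "X", "2025-02-10"),
            ("Grace", "X", "2025-01-15"),
            ("Linus", "Y", "2025-02-20"),
            ("Ada", "X", "2025-02-25"),
        ],
    )
    return conn


def customers_who_ordered(conn, product, month):
    """Hypothetical tool handler; month is a 'YYYY-MM' string."""
    rows = conn.execute(
        "SELECT DISTINCT customer FROM orders "
        "WHERE product = ? AND strftime('%Y-%m', ordered_on) = ? "
        "ORDER BY customer",
        (product, month),  # bound parameters, never string formatting
    ).fetchall()
    return [row[0] for row in rows]


conn = build_demo_db()
found = customers_who_ordered(conn, "X", "2025-02")
# A hostile-looking argument is treated as a plain value, not as SQL.
injected = customers_who_ordered(conn, "X' OR 1=1 --", "2025-02")
```

Exposing a narrow, purpose-built function like this is more conservative than letting the model send arbitrary SQL, at the cost of needing one handler per kind of question.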

Key Takeaway

MCP is a significant step towards making AI more practical and trustworthy. By providing a standardized, secure bridge between AI models and the vast universe of data and tools, it promises to unlock new levels of automation. While still evolving, it represents a compelling vision for how humans and AI can collaborate more effectively - letting AI step out of its sandbox and interact meaningfully with our working world.

Share :

You May Also Like

Transforming Industries with Text and Image Generative AI

A Real-World Example Consider a scenario that illustrates where generative AI delivers genuine value. An online grocery platform wants to help busy professionals eat healthier. Using text generation, …

Read More

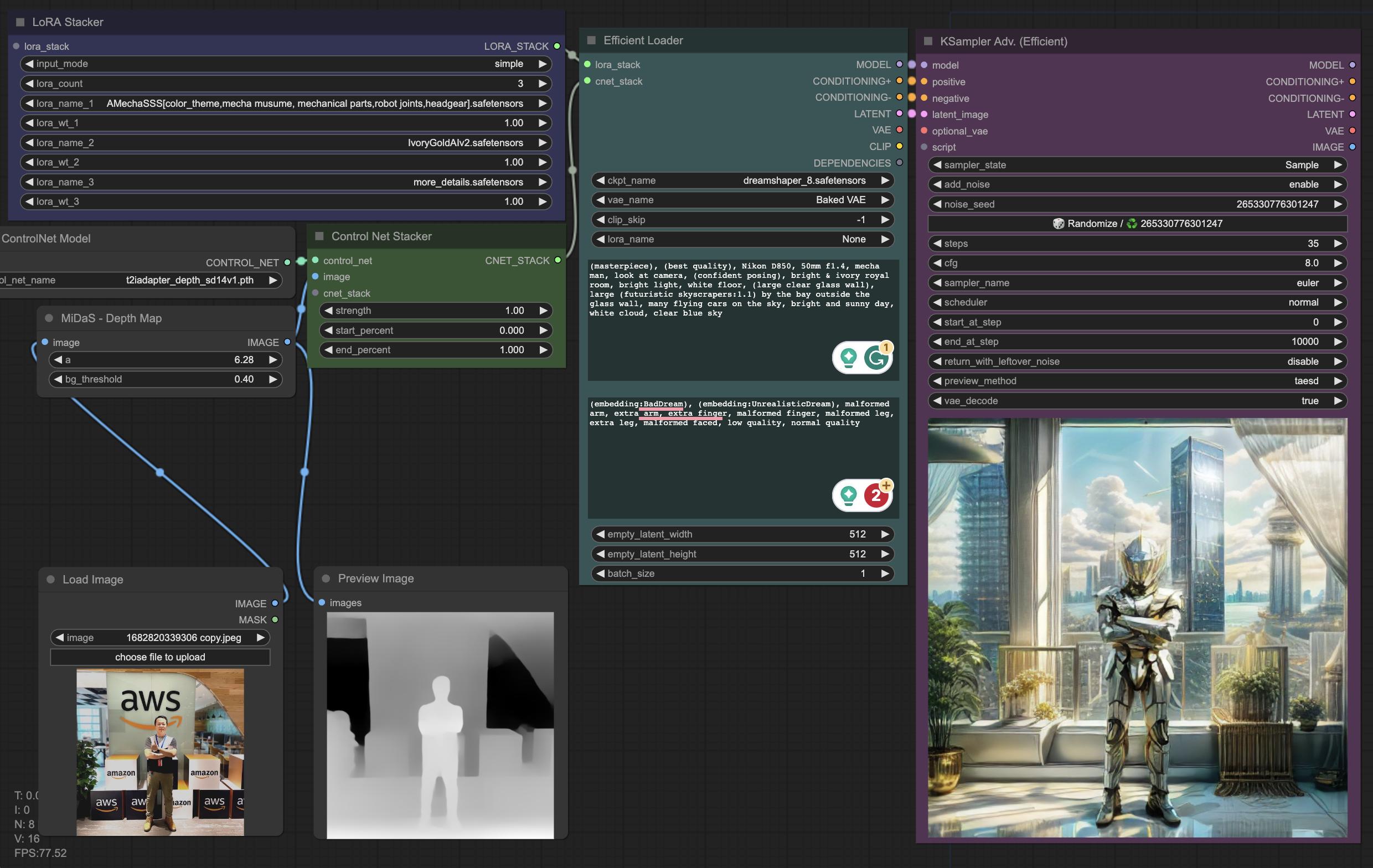

Fun Things To Do With Self-Hosted Generative AI

Weekend Experiments Weekends are my time to explore. My homelab runs a full generative AI stack - models, workflows, UIs - and I use that freedom to experiment with things that commercial services …

Read More